Förderjahr 2017 / Science Call #1 / ProjektID: / Projekt: SEPSES

After some months of development, we are delighted to announce that our work on the Semantic LOG ExtRaction Template (SLOGERT) has been accepted for publication on the ESWC 2021 conference!

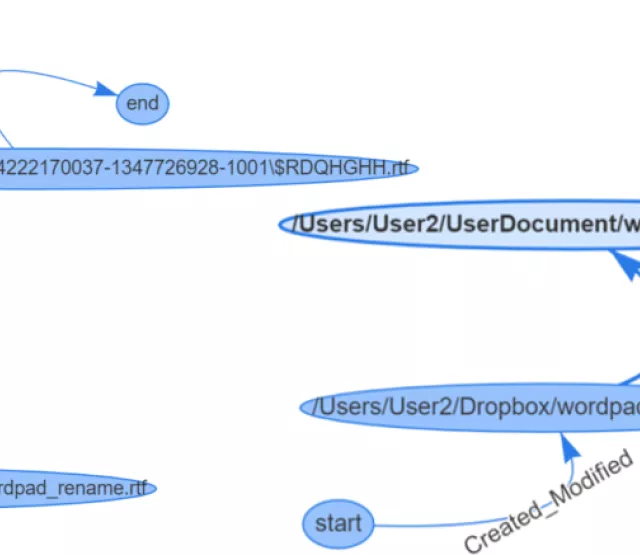

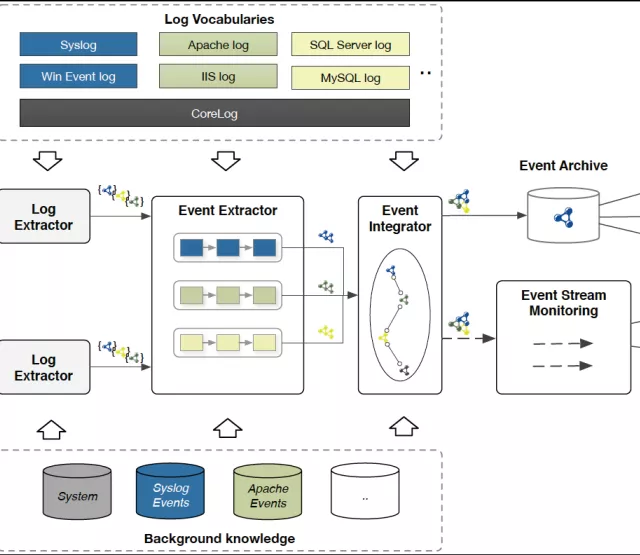

SLOGERT is an approach to (semi-)automatically transform raw log data, i.e., textual records of system events, into RDF graphs following a sequence of processes. SLOGERT supports automatic identification of rich RDF graph modelling patterns to represent types of events and extracted parameters that appear in a log stream. Analyzing raw log data typically involves tedious searching for and inspecting clues, as well as tracing and correlating them across unstructured, heterogeneous, and (potentially) fragmented log sources. The resulting Knowledge Graph (KG) enables analysts to navigate and query an integrated, enriched view of the events and thereby facilitates a novel approach for log analysis.

For this publication we also demonstrated the viability of this approach by conducting a performance analysis and illustrated its application on a large public log dataset in the security domain.

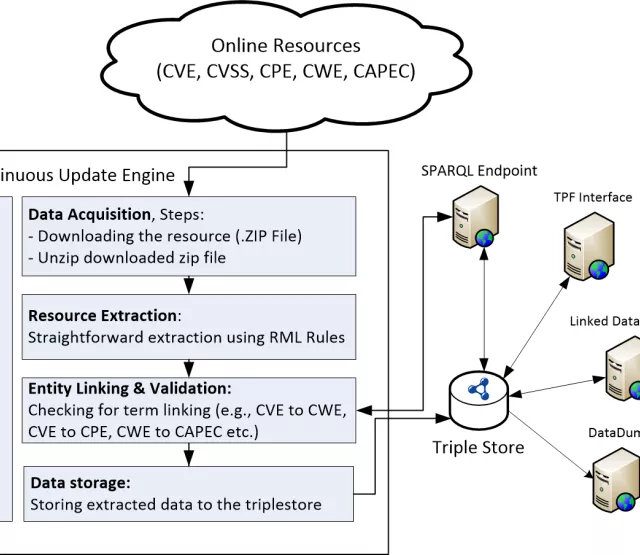

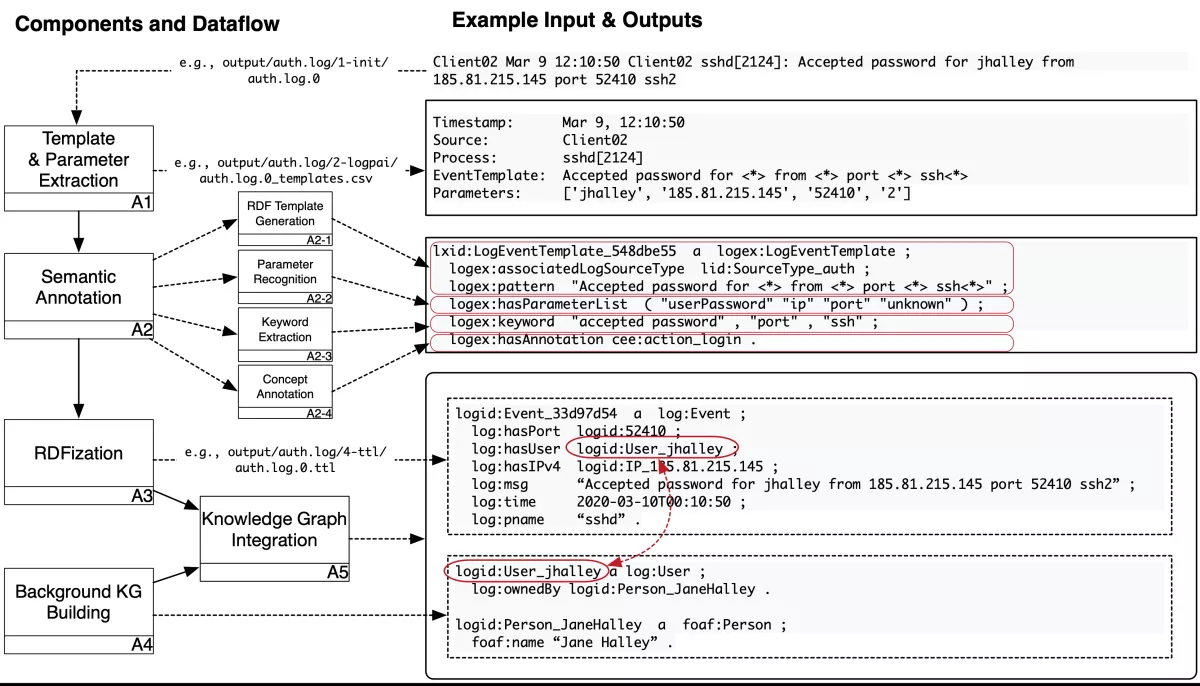

The SLOGERT workflow is visualized in the following figure and described briefly below:

- A1) Template & Parameter Extraction process takes in a log file and produces two files: (i) a list of log templates discovered in the log file, each including markings of the position of variable parts (parameters), and (ii) the actual instance content of the logs, with each log line linked to one of the log template ids, and the extracted instance parameters as an ordered list. For this process, we rely on LogPAI, a log parsing toolkit.

- A2) Semantic Annotation takes the log templates and instance data with the extracted parameters as input and

- (A2-1) generates RDF rewriting templates that conform to an ontology and persists the templates in RDF for later reuse,

- (A2-2) detects (where possible) the semantic types of the extracted parameters,

- (A2-3) enriches the templates with extracted keywords (A2-3), and

- (A2-4) annotates the templates with CEE terms (A2-4).

- A3) RDFization. In this step, we expand the log instance data into an RDF graph that conforms to the log vocabulary and contains one log file. We currently rely on the Lutra engine for the RDFization process.

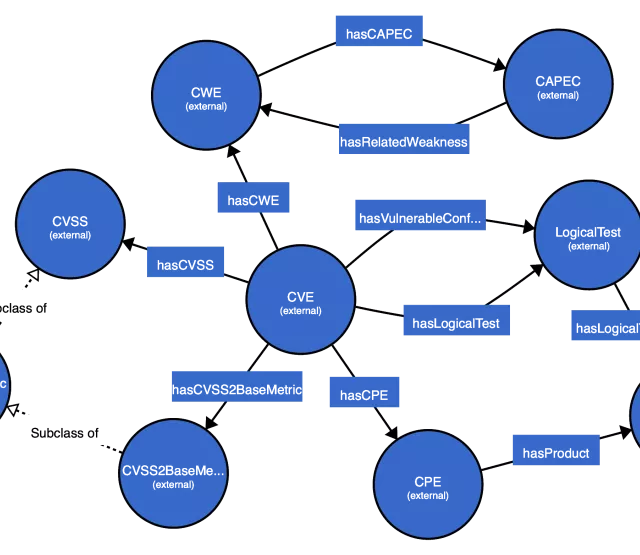

- A4) Background KG building, where we build a Knowledge Graph containing information relevant to the log KG produced from step (A3).

- A5) KG Integration combines the KGs from the previously isolated log files and sources into a single, linked representation. Here, we rely on the standardised instance URIs to connect data from step (A3) and (A4).

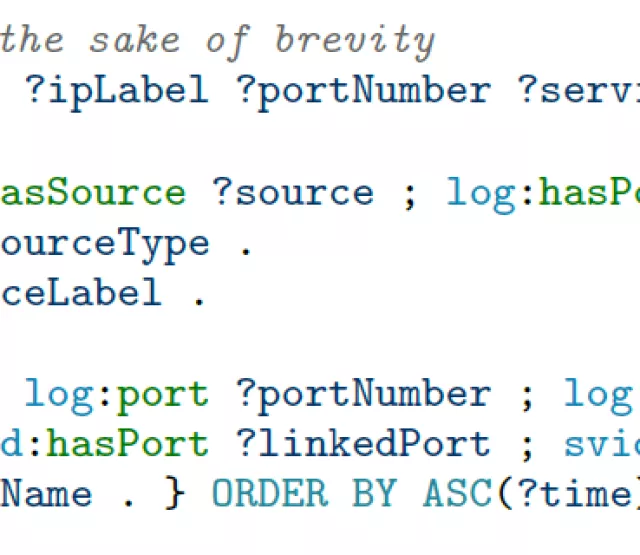

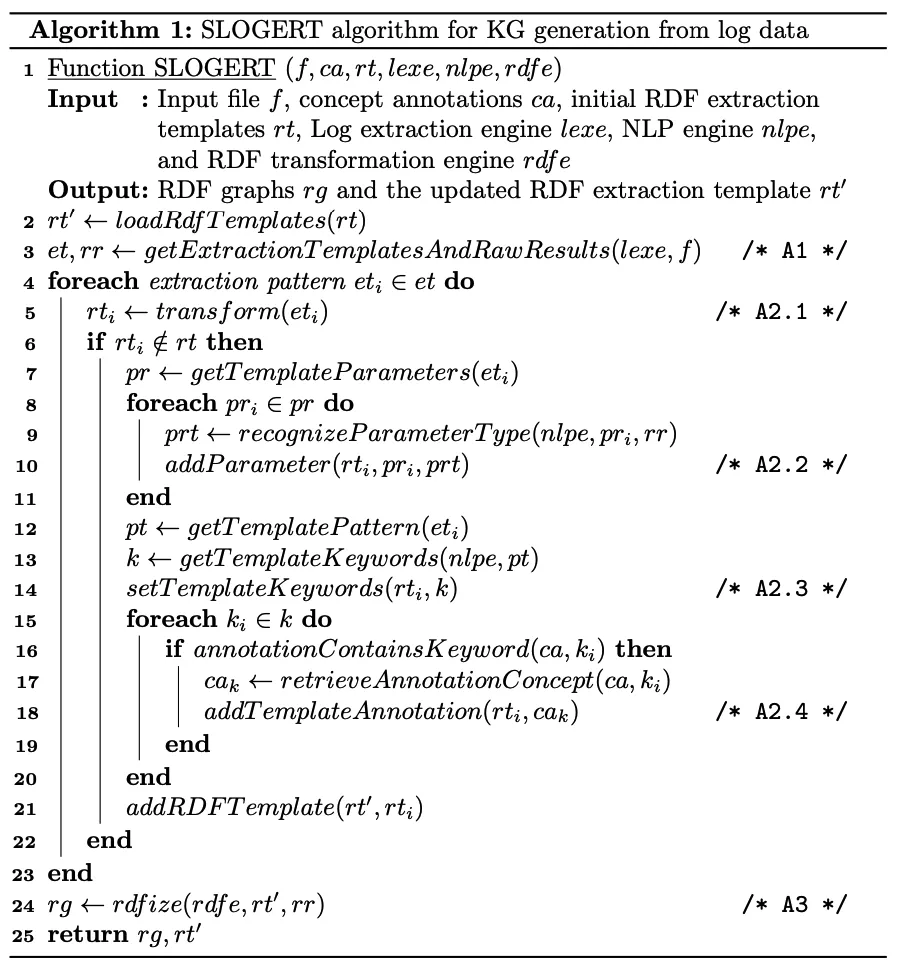

The following pseudocode of SLOGERT describes the main processes A1, A2, and A3 in technical detail.

You can access the source codes, examples, and further information about SLOGERT in our GitHub repository, and we are looking forward to comments and feedback!

Andreas Ekelhart