Funding year 2018 / Scholarship Call #13 / Project ID: 3793 / Project: Data Management Strategies for Near Real-Time Edge Analytics

The problem of limited edge storage is mostly addressed from the perspective of traditional cloud-based databases, with storage optimization investigated separately from data analytics approaches and system operations.

One can observe that even within a single edge analytics system:

- Ob1: IoT data fall into different model types, i.e., multi-model data such as near real-time streaming data and log-based data, and thus require different storage types and governance policies. They also differ in significance for storage and edge analytics, since not all applications and sensors are equally important;

- Ob2: Data from different IoT sensors contain various errors, such as missing data, outliers, noise, and anomalies, which affect the design of edge analysis pipelines and, correspondingly, the decision-making processes;

- Ob3: Data from different IoT sensors arrive at different generation rates, consequently producing different data volumes over the same time interval. At the same time, different types of monitored sensors require different data volumes for meaningful analytics.
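To make the three observations concrete, the following minimal sketch describes a sensor by a relative importance (Ob1), measures the share of missing readings in a window (Ob2), and computes the data volume expected over a fixed interval (Ob3). The `SensorProfile` class, its field names, and all numbers in the usage note are illustrative assumptions, not part of the project.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SensorProfile:
    """Hypothetical per-sensor description; all field names are illustrative."""
    name: str
    importance: float        # Ob1: assumed relative significance, 0.0 .. 1.0
    sample_period_s: float   # Ob3: seconds between two consecutive readings
    bytes_per_sample: int    # Ob3: assumed payload size of one reading


def expected_samples(profile: SensorProfile, interval_s: float) -> int:
    """Ob3: number of readings the sensor should produce within the interval."""
    return int(interval_s // profile.sample_period_s)


def expected_volume_bytes(profile: SensorProfile, interval_s: float) -> int:
    """Ob3: data volume the sensor should produce within the interval."""
    return expected_samples(profile, interval_s) * profile.bytes_per_sample


def missing_ratio(readings: List[Optional[float]]) -> float:
    """Ob2: fraction of readings in a window that are missing (None)."""
    if not readings:
        return 1.0  # an empty window is treated as entirely missing
    return sum(r is None for r in readings) / len(readings)
```

With these assumed values, a smart meter sampled once per second at 16 bytes per reading yields 57600 bytes per hour, while a laboratory sensor sampled once per minute yields 960 bytes over the same hour, which is the kind of imbalance Ob3 describes.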

Currently, all of these issues are solved outside edge data services. Solutions to them can be customized, and they must be incorporated into the design of (new) edge data storage systems. These observations call for crucial changes to traditional approaches, which assume consistently low latency, high availability, and centralized storage, and therefore cannot be generalized to edge storage services and unreliable distributed IoT systems. Elasticity for edge storage services is currently an unsolved problem. It is important to bring these various features into the design of a future (single) edge storage system.

An example of a traditional single analytics system

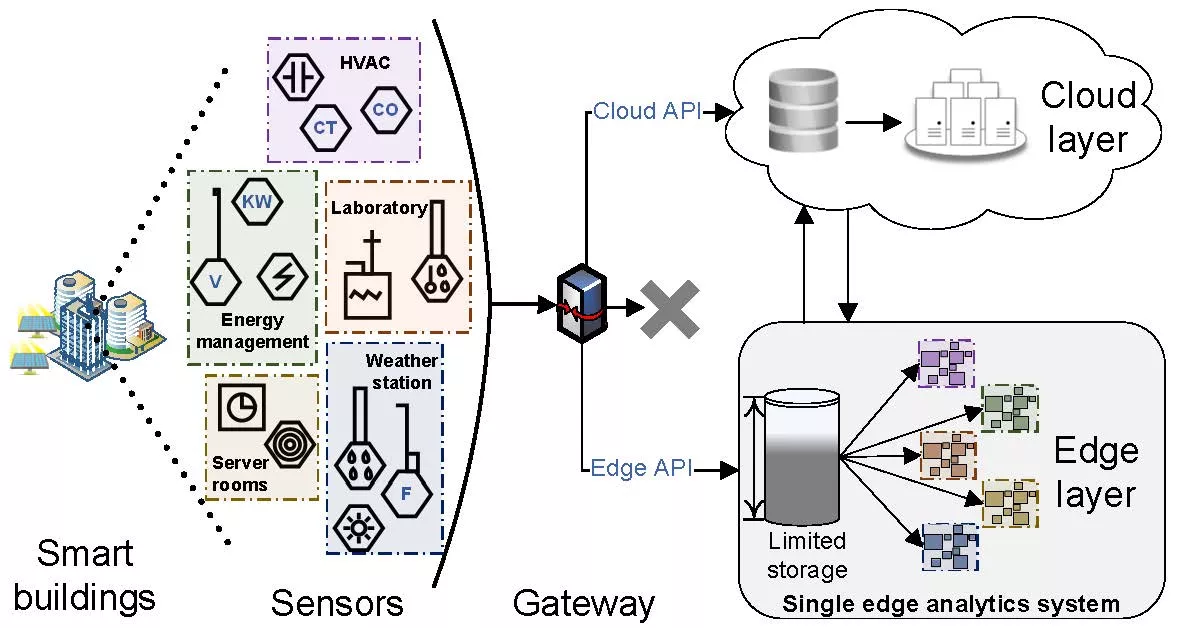

In an IoT sensor environment, such as the university smart building shown in Figure 1, we can observe data workloads from different IoT applications and decide whether to (1) push data to cloud data storage, (2) keep relevant data for local edge analytics, or (3) discard data that are not useful for future analytics.

In the first case, traditionally, all data are transferred to resource-rich cloud data centers, where storage- and compute-intensive workloads can be handled and the necessary control commands for IoT actuators are produced. However, growing data streams and the latency requirements of IoT applications often make data transfer to a distant cloud impractical.

Recent solutions for making crucial, fast decisions in IoT systems have brought up the second case, which employs edge nodes. In an IoT system such as a university smart building equipped with many sensors measuring internal subsystems, data from HVAC (Heating, Ventilation, and Air Conditioning) sensors do not have the same importance as data from smart meters and solar panels, which are essential for energy management (Ob1); incomplete data from weather stations can occur due to external conditions, while missing data from server room sensors can be caused by internal failures (Ob2); and an energy management subsystem has a higher data generation frequency than a laboratory subsystem (Ob3). Accordingly, each of these subsystems requires a different approach to sensor data analysis, although the same edge storage system is used to integrate data for edge analytics. In addition, limited storage capacity at the network edge prevents us from keeping all generated data.

In the third case, due to the limited underlying network infrastructure, some data can be filtered or reduced to save bandwidth and storage space, at the cost of later degradation of Quality of Service (QoS) and possible data integrity problems.
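The three options (push to the cloud, keep at the edge, discard) can be sketched as a simple placement function. The text does not prescribe a decision rule, so the rule below is only an assumed illustration: the `importance` score, the `min_importance` threshold, and the `needs_fast_decision` flag are hypothetical inputs.

```python
from enum import Enum


class Placement(Enum):
    CLOUD = "push to cloud data storage"
    EDGE = "keep for local edge analytics"
    DISCARD = "discard"


def decide_placement(
    importance: float,
    needs_fast_decision: bool,
    edge_free_bytes: int,
    batch_bytes: int,
    min_importance: float = 0.2,  # hypothetical cut-off, not from the source
) -> Placement:
    """Pick one of the three options for a batch of sensor data."""
    # (3) Data judged not useful for future analytics are dropped.
    if importance < min_importance:
        return Placement.DISCARD
    # (2) Latency-sensitive data stay at the edge if the batch still fits.
    if needs_fast_decision and batch_bytes <= edge_free_bytes:
        return Placement.EDGE
    # (1) Everything else goes to the resource-rich cloud.
    return Placement.CLOUD
```

A real edge storage service would likely replace the fixed threshold with a policy that adapts to the current workload; the sketch only shows where the three cases branch.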

Edge-deployed systems must maintain multi-model data

On the one hand, metrics and events generated by sensors as time series might conform to a common IoT data model. On the other hand, it is important to support other data types across different data stores: triggering certain functions, maintaining logs of state information for IoT devices and equipment, and exchanging and combining data between different storage types. Thus, given the multi-model data, resource-limited edge nodes, and complex data management at the network edge, the next generation of storage services must deal with many trade-offs to make sure that edge analytics is always served with the most relevant and suitable data. Edge analytics has to meet a certain quality of analytics, including the amount of data available, timely decisions, and certain levels of data accuracy.

Therefore, developing such reliable and elastic edge storage services is of paramount importance. From an architectural design viewpoint, we must identify which data should be kept at the edge nodes, how long to store them, and which data processing utilities can help with these decisions.
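As a rough illustration of the two questions "which data to keep" and "how long to store them", the sketch below first drops records whose retention time has expired and then fills a bounded edge store with the most important remaining records. This is one possible policy under stated assumptions, not the project's design: the `Record` fields, the per-record `ttl_s`, and the importance-first ordering are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Record:
    """Hypothetical stored item; all fields are illustrative."""
    sensor: str
    timestamp_s: float
    size_bytes: int
    importance: float  # assumed significance score (higher is kept first)
    ttl_s: float       # assumed retention time for this record


def retain(records: List[Record], now_s: float, capacity_bytes: int) -> List[Record]:
    """Return the records that stay at the edge under a capacity limit."""
    # How long: drop every record older than its retention time.
    live = [r for r in records if now_s - r.timestamp_s <= r.ttl_s]
    # Which data: prefer higher importance, then newer timestamps.
    live.sort(key=lambda r: (r.importance, r.timestamp_s), reverse=True)
    kept: List[Record] = []
    used = 0
    for r in live:
        if used + r.size_bytes <= capacity_bytes:
            kept.append(r)
            used += r.size_bytes
    return kept
```

A greedy fill like this is simple but not optimal for mixed record sizes; it is used here only to show how retention time and importance can interact with limited edge capacity.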